The Linux File System Hunting Expedition

What the filesystem reveals when you stop using it and start reading it.

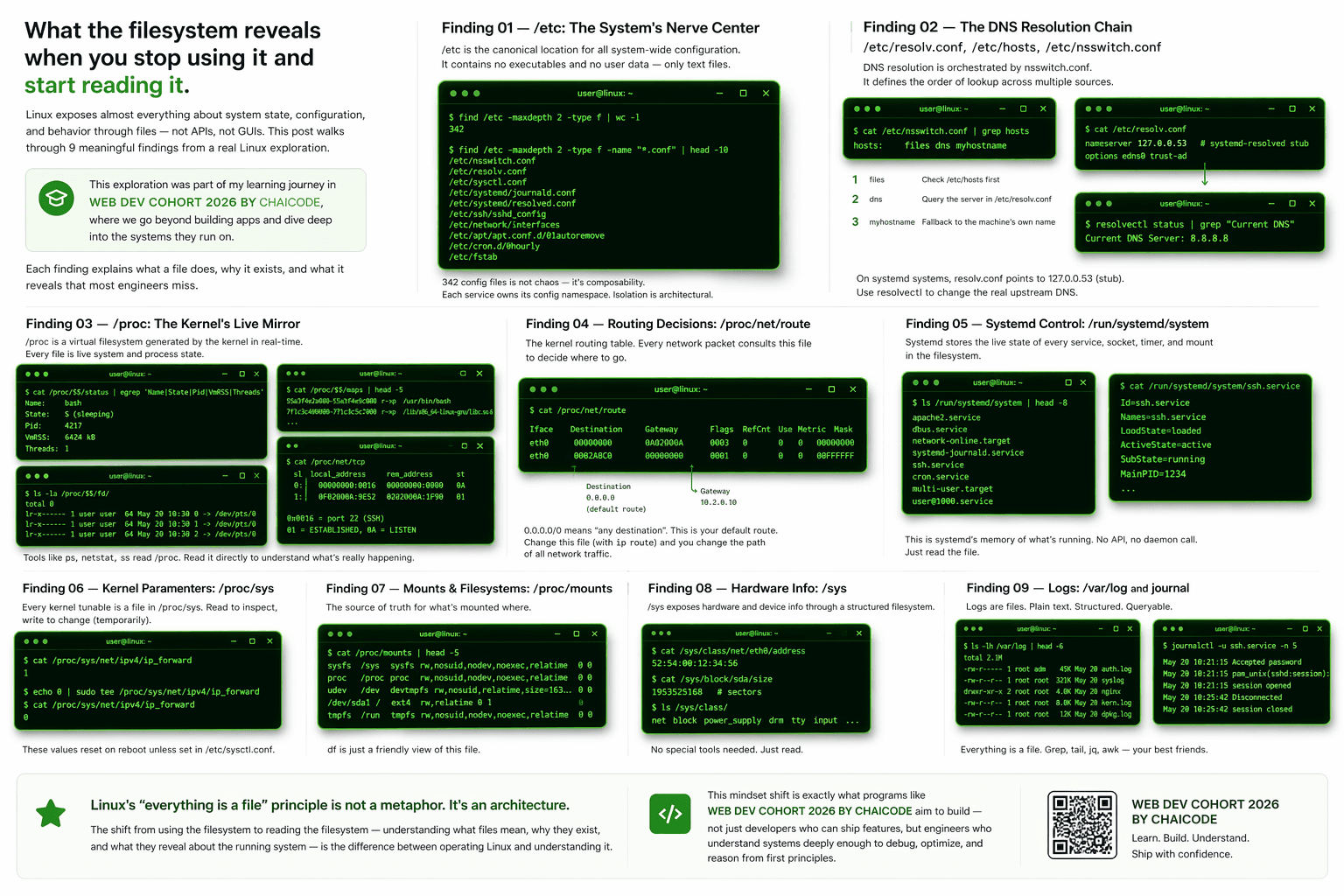

Linux exposes almost everything about system state, configuration, and behavior through files — not APIs, not GUIs. This post walks through 9 meaningful findings from a real Linux exploration: how DNS actually resolves, what /proc exposes about live processes, how routing decisions are made in the kernel, how systemd controls your boot, and more. Each finding explains what a file does, why it exists, and what it reveals that most engineers miss.

This post assumes familiarity with basic Linux usage. It is aimed at engineers who want to understand what's happening under the hood — not just which commands to run.

Problem

Most engineers interact with Linux through commands — systemctl, ip, ps, top. These tools work, but they hide the actual source of truth. Every one of those commands is ultimately reading a file somewhere in the filesystem.

When something breaks in production — a DNS failure, a routing anomaly, a service that won't start — the engineers who resolve it fastest are not the ones who know the most commands. They're the ones who know where the system keeps its state and how to read it directly.

This is a hunting expedition through that state.

Finding 01 — /etc: The System's Nerve Center

What it does

/etc is the canonical location for all system-wide configuration. It contains no executables and no user data — only text files that define how the system and its services behave.

Why it exists

Unix's design separates binaries (in /bin, /sbin) from configuration. This means you can reinstall a service without losing its config, or audit the entire system state by reading plain text. No registry. No binary blobs.

What I found

find /etc -maxdepth 2 -type f | wc -l

# 342

find /etc -maxdepth 2 -type f -name "*.conf" | head -10

# /etc/nsswitch.conf

# /etc/resolv.conf

# /etc/sysctl.conf

# /etc/systemd/journald.conf

# /etc/systemd/resolved.conf

# /etc/ssh/sshd_config

# /etc/network/interfaces

342 config files is not chaos — it's composability. Each service owns its config namespace. A bad config in /etc/nginx/nginx.conf cannot affect /etc/ssh/sshd_config. The isolation is architectural, not accidental.

Why it matters

There is no central config API to corrupt. When you want to understand how any service behaves, you read one file. When you want to audit a system, you diff /etc against a known-good baseline. Tools like etckeeper (which version-controls /etc with git) exist precisely because this directory is the source of truth for the entire system's behavior.

Finding 02 — The DNS Resolution Chain: /etc/resolv.conf, /etc/hosts, /etc/nsswitch.conf

What it does

Most engineers know resolv.conf holds the DNS server IP. What's less understood is that it's only one node in a lookup chain orchestrated by nsswitch.conf.

Why it exists

DNS is not the only way to resolve a hostname. The system might check local files first, query LDAP in a corporate environment, or use mDNS for local network discovery. nsswitch.conf (Name Service Switch) is the configuration layer that defines this chain.

What I found

cat /etc/nsswitch.conf | grep hosts

# hosts: files dns myhostname

That single line controls the entire DNS resolution order:

files — check

/etc/hostsfirstdns — query the server listed in

/etc/resolv.confmyhostname — fall back to the machine's own configured name

Change the order and you change how every hostname resolves on the machine.

cat /etc/resolv.conf

# nameserver 127.0.0.53 <- systemd-resolved stub listener

# options edns0 trust-ad

# The real upstream DNS lives here:

resolvectl status | grep "Current DNS"

# Current DNS Server: 8.8.8.8

The insight

On systemd systems, resolv.conf often points to 127.0.0.53 — a local stub resolver run by systemd-resolved. If you edit resolv.conf directly thinking you're changing your DNS, you're actually reconfiguring the stub's behavior. The real upstream is configured through systemd-resolved.

On Ubuntu 20.04+, /etc/resolv.conf is a symlink to /run/systemd/resolve/stub-resolv.conf. Overwriting it manually gets reverted on the next network event. Use resolvectl instead.

Common trap: Many engineers have spent hours "fixing" DNS by editing

resolv.conf, watching it work, then watching it break again after a DHCP lease renewal. The symlink is why.

Finding 03 — /proc: The Kernel's Live Mirror

What it does

/proc is a virtual filesystem — it has no entries on disk. It exists purely in RAM, generated on-the-fly by the kernel whenever you read from it. Every file in /proc is live, real-time kernel and process state.

Why it exists

Before /proc, getting process information required privileged kernel calls. /proc democratized this: any process can read its own state (and with appropriate permissions, the state of others) by opening a file. It made the kernel inspectable without a debugger.

What I found

# Read your current shell's process state

cat /proc/$$/status

# Name: bash

# State: S (sleeping)

# Pid: 4217

# VmRSS: 6424 kB <- actual RAM used right now

# Threads: 1

# See every open file descriptor of the current process

ls -la /proc/$$/fd/

# 0 -> /dev/pts/0 (stdin)

# 1 -> /dev/pts/0 (stdout)

# 2 -> /dev/pts/0 (stderr)

# See the memory map — every loaded library

cat /proc/$$/maps | head -5

# 55a3f4e2a000-55a3f4e9c000 r-xp /usr/bin/bash

# 7f1c3c400000-7f1c3c5c7000 r-xp /lib/x86_64-linux-gnu/libc.so.6

The most underused file in /proc:

cat /proc/net/tcp

# sl local_address rem_address st

# 0: 00000000:0016 00000000:0000 0A <- port 22, LISTEN

# 1: 0F02000A:9E52 0202000A:1F90 01 <- ESTABLISHED connection

# Addresses are little-endian hex. 0x0016 = port 22 (SSH)

The insight

Tools like netstat, ss, top, and ps are not special — they read /proc and format the output. If those tools were unavailable (forensics on a compromised machine, or a minimal container), you can reconstruct everything from raw /proc reads. This is exactly how forensic and EDR tools work.

Finding 04 — Routing Table Inspection via /proc/net/route

What it does

Every packet leaving a machine passes through the kernel's routing table. Before ip route existed, the routing table was always accessible at /proc/net/route.

Why it exists

The routing table is kernel state. /proc/net/route is simply the kernel exposing that state as a file — consistent with the everything-is-a-file philosophy.

What I found

cat /proc/net/route

# Iface Destination Gateway Flags Metric Mask

# eth0 00000000 0101A8C0 0003 100 00000000 <- default gateway

# eth0 0001A8C0 00000000 0001 100 00FFFFFF <- local subnet

# Decoded: 0101A8C0 = 192.168.1.1 (little-endian hex)

# Flag 0003 = RTF_UP | RTF_GATEWAY — this is the default route

# Same info, human-readable:

ip route show

# default via 192.168.1.1 dev eth0 proto dhcp metric 100

# 192.168.1.0/24 dev eth0 proto kernel scope link src 192.168.1.15

You can also inspect the ARP cache without any tools:

cat /proc/net/arp

# IP address HW type Flags HW address Device

# 192.168.1.1 0x1 0x2 aa:bb:cc:dd:ee:ff eth0

The insight

When you add a route with ip route add, it writes into kernel memory and is immediately reflected in /proc/net/route. There is no config file involved — the kernel IS the truth. Combined with /proc/net/tcp and /proc/net/arp, you have a complete network stack picture without installing a single diagnostic tool.

Finding 05 — User Management: /etc/passwd vs /etc/shadow

What it does

/etc/passwd maps usernames to UIDs, home directories, and shells. /etc/shadow stores password hashes. They were originally one file — understanding why they split reveals a fundamental security lesson.

Why it exists

Originally, Unix stored password hashes in /etc/passwd — a world-readable file (any user could read it). This meant any local user could copy all password hashes and run offline cracking attacks. /etc/shadow was introduced to separate credentials from identity.

What I found

cat /etc/passwd | grep -v nologin | grep -v false

# root:x:0:0:root:/root:/bin/bash

# ubuntu:x:1000:1000:Ubuntu:/home/ubuntu:/bin/bash

# Fields: username:password_placeholder:UID:GID:GECOS:home:shell

# The 'x' means: the real hash is in /etc/shadow

sudo cat /etc/shadow | grep ubuntu

# ubuntu:\(6\)rounds=4096\(salt\)hash...:19234:0:99999:7:::

# \(6\) = SHA-512 hash algorithm

# 19234 = days since Unix epoch when password was last changed

# 99999 = max days before forced change (effectively never)

# 7 = warn user 7 days before expiry

# Permissions reveal the design:

ls -la /etc/passwd /etc/shadow

# -rw-r--r-- 1 root root /etc/passwd <- world-readable

# -rw-r----- 1 root shadow /etc/shadow <- root + shadow group only

The insight

UIDs below 1000 in /etc/passwd are system accounts: daemon, www-data, nobody. They exist to run services with minimal privilege. A web server running as UID 33 (www-data) cannot read /etc/shadow, write to /home, or escalate without an explicit privilege vulnerability.

The UID system IS the permission model. There is no separate ACL engine — file ownership and the three permission bits (rwx) enforced by the kernel against UIDs are the entire foundation.

Finding 06 — Live Kernel Tuning via /proc/sys

What it does

/proc/sys exposes kernel tunables as files. Reading a file shows the current value. Writing to it changes kernel behavior immediately — no restart required. This is what sysctl does under the hood.

Why it exists

Kernel parameters like network buffer sizes, TCP behavior, and memory management need to be tunable in production without rebooting. /proc/sys provides a safe, file-based interface that maps directly to kernel variables.

What I found

cat /proc/sys/net/ipv4/ip_forward

# 0 <- IP forwarding disabled; this machine won't route packets

cat /proc/sys/vm/swappiness

# 60 <- kernel starts swapping when ~40% RAM is free

cat /proc/sys/fs/file-max

# 9223372036854775807 <- max open files system-wide

cat /proc/sys/kernel/hostname

# ubuntu-server

# Enable IP forwarding live (e.g., to set up a VPN gateway):

echo 1 > /proc/sys/net/ipv4/ip_forward

# Equivalent to: sysctl -w net.ipv4.ip_forward=1

# To persist across reboots:

echo "net.ipv4.ip_forward=1" >> /etc/sysctl.conf

The insight

The split between /proc/sys (runtime) and /etc/sysctl.conf (persistent) is the source of a recurring production failure pattern: a change is made at runtime during an incident, it works perfectly, nobody writes it to sysctl.conf, and it silently disappears after the next reboot. The system "breaks again for no reason."

Always treat /proc/sys writes as temporary until you verify the corresponding sysctl.conf entry exists.

Finding 07 — systemd Service Architecture: /lib/systemd/system/ vs /etc/systemd/system/

What it does

systemd uses unit files to define how services start, stop, restart, and depend on each other. These files live in two locations with a deliberate precedence hierarchy.

Why it exists

Package managers install default unit files. System administrators need to override them without touching package-managed files (which would be overwritten on upgrade). The two-location design solves this cleanly.

What I found

| Location | Owner | Purpose | Wins on conflict? |

|---|---|---|---|

/lib/systemd/system/ |

Package manager | Default unit files from packages | No |

/etc/systemd/system/ |

System administrator | Overrides and custom units | Yes — always |

/run/systemd/system/ |

Runtime | Transient units, gone after reboot | Between the two |

cat /lib/systemd/system/ssh.service

# [Unit]

# Description=OpenBSD Secure Shell server

# After=network.target auditd.service

# ConditionPathExists=!/etc/ssh/sshd_not_to_be_run

#

# [Service]

# Type=notify

# ExecStartPre=/usr/sbin/sshd -t <- validates config before start

# ExecStart=/usr/sbin/sshd -D

# ExecReload=/bin/kill -HUP $MAINPID <- graceful reload

# Restart=on-failure

# RestartPreventExitStatus=255

#

# [Install]

# WantedBy=multi-user.target

The override pattern — modify a service without touching the package file:

systemctl edit ssh.service

# Creates: /etc/systemd/system/ssh.service.d/override.conf

# Example — add an environment variable:

# [Service]

# Environment="EXTRA_OPTS=-p 2222"

# See the final merged unit (base + all overrides):

systemctl cat ssh.service

The insight

The drop-in directory pattern (service.d/*.conf) means you can tune any service and survive package upgrades. When the package updates, your overrides survive because they live in /etc, not /lib. This is one of systemd's most useful and most underused features.

The After=network.target line also reveals how systemd builds a dependency graph — services don't just start sequentially, they declare relationships. ConditionPathExists=!/etc/ssh/sshd_not_to_be_run means you can disable SSH just by touching a file. That's policy-as-file.

Finding 08 — /dev: Hardware as Files, and the Kernel Driver Table

What it does

/dev contains device files — the abstraction layer between userspace and hardware. Not just disks and terminals: /dev exposes the kernel's "everything is a file" principle in its most literal form.

Why it exists

Rather than creating separate syscalls for every device type, Unix maps devices to file operations (open, read, write, ioctl). Programs use the same file I/O they already know. The kernel routes these calls to the appropriate driver based on device numbers.

What I found

ls -la /dev/null /dev/zero /dev/random /dev/urandom

# crw-rw-rw- 1 root root 1, 3 /dev/null

# crw-rw-rw- 1 root root 1, 5 /dev/zero

# crw-rw-rw- 1 root root 1, 8 /dev/random

# crw-rw-rw- 1 root root 1, 9 /dev/urandom

# 'c' = character device

# "1, 3" = major:minor — the kernel's index into its driver table

# Major 1 = mem device driver; Minor 3 = /dev/null, 5 = /dev/zero

# Read 500MB of zeros from the kernel — zero disk I/O:

dd if=/dev/zero bs=1M count=500 | wc -c

# 524288000

# Check kernel entropy pool size:

cat /proc/sys/kernel/random/entropy_avail

# 3842 <- bits of entropy available to /dev/random

The /dev/urandom vs /dev/random reality

/dev/random blocks when kernel entropy is low. /dev/urandom never blocks — it uses a CSPRNG seeded by the entropy pool. Since Linux 4.8, /dev/urandom is safe for all cryptographic use except on freshly-booted embedded systems with no entropy sources. The old advice to always use /dev/random for security is outdated and causes unnecessary blocking in production systems.

The insight

When you open /dev/sda, the kernel looks up major number 8, finds the SCSI disk driver, and routes all reads and writes through it. Every open(), read(), write() on a device file is a driver call in disguise. The file abstraction is total — and it means you can interact with hardware using the same tools you use for text files.

Finding 09 — System Logs: /var/log vs journald

What it does

Modern Linux runs two log systems simultaneously: legacy text-file logs in /var/log/ and the binary journal managed by systemd-journald. Understanding both reveals why they coexist and when each matters.

Why both exist

/var/log text files exist for compatibility: tools written before systemd, log shippers (Filebeat, Fluentd), and grep. journald exists because text logs lose structure — you can't efficiently query "all errors from service X between 14:00 and 15:00" in plain text at scale.

What I found

ls -lh /var/log/

# auth.log <- PAM auth, sudo, SSH logins

# syslog <- general system messages

# kern.log <- kernel messages only

# apt/ <- package install/remove history

# journal/ <- binary journal data (journald)

# Real brute-force attempt visible in auth.log:

grep "Failed password" /var/log/auth.log | tail -3

# Apr 18 03:21:44 sshd[12311]: Failed password for root from 45.33.32.156

# Apr 18 03:21:47 sshd[12314]: Failed password for root from 45.33.32.156

# Apr 18 03:21:51 sshd[12317]: Failed password for root from 45.33.32.156

# journald: structured, indexed, queryable:

journalctl -u ssh.service --since "1 hour ago" --no-pager

# Last 20 error-level entries across all services:

journalctl -p err -n 20

# How much disk space the journal is using:

journalctl --disk-usage

# Archived and active journals take up 212.0M in the file system.

cat /etc/systemd/journald.conf | grep -v "^#" | grep -v "^$"

# [Journal]

# SystemMaxUse=500M <- auto-rotate when journal hits 500MB

The insight

The binary journal at /var/log/journal/ stores metadata (service name, PID, priority, timestamp) as structured fields indexed for fast retrieval. This is why journalctl -u nginx -p err --since yesterday is orders of magnitude faster than grep ERROR /var/log/syslog.

A non-obvious trap: on minimal installs, journald writes to memory at /run/log/journal/ — which disappears on reboot. Logs vanish after every restart. To make the journal persistent, you create the directory manually:

mkdir -p /var/log/journal

systemd-tmpfiles --create --prefix /var/log/journal

This catches engineers off guard when incident logs are gone after a server restart.

Results

After this exploration, the pattern that emerges is consistent:

Configuration → plain text in

/etc, composable, diffable, version-controllableRuntime state → virtual files in

/procand/sys, live kernel data with zero disk I/OHardware → device files in

/dev, driver calls disguised as file operationsLogs → two parallel systems for different access patterns

Every production tool you use — ps, netstat, sysctl, ip route, top — is a reader of these files. Understanding the source gives you the ability to work when the tools aren't available, debug when behavior is unexpected, and audit when something has silently changed.

Trade-offs

/procexposes sensitive data. On a multi-user system, process listings and network state are visible to all users by default. Kernel namespaces (used by containers) restrict this.Direct writes to

/proc/sysare immediate and have no undo. A wrong value forvm.overcommit_memoryornet.core.somaxconncan degrade the system instantly.journald's binary format is not human-readable withoutjournalctl. If journald itself crashes, those logs may be unrecoverable — which is why the text fallback in/var/log/syslogstill matters.

Conclusion

Linux's "everything is a file" principle is not a metaphor. It's an architecture. Every piece of system state, every configuration, every live process, every kernel parameter, every hardware device is reachable through the filesystem.

The shift from using the filesystem to reading the filesystem — understanding what files mean, why they exist, and what they reveal about the running system — is the difference between operating Linux and understanding it.